Outstanding Paper Award at ICLR 2025

We are pleased to announce that our research paper, Masked Generative Priors Improve World Models Sequence Modelling Capabilities, has received an Outstanding Paper Award at the World Models Workshop of the International Conference on Learning Representations (ICLR 2025). This recognition from a leading deep learning conference is a strong validation of our team’s work in advancing model-based reinforcement learning.

By building an internal simulation, or “imagination”, of the world, World Models can predict future outcomes and learn complex behaviors with far less real-world interaction, tackling one of the biggest hurdles in AI: data efficiency. The quality of these imagined scenarios, however, depends entirely on the accuracy of the world model.

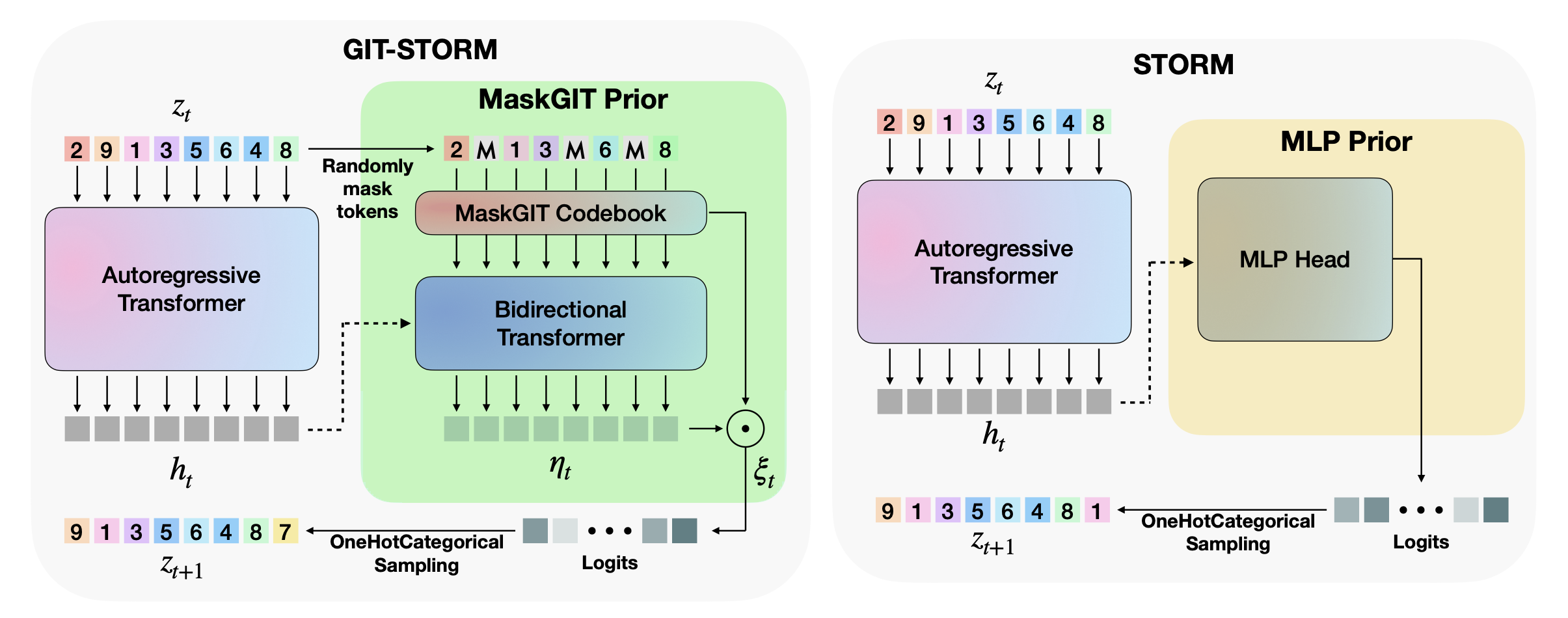

Our paper introduces a new architecture, GIT-STORM, that improves the predictive accuracy of these models.

The Challenge: Improving AI’s Predictive Models

World Models rely on dynamics modules to predict future states autoregressively, feeding each prediction back in as input for the next one. With the wrong inductive bias, small errors compound over time and lead to “hallucinations”, where the predicted future drifts increasingly far from reality. Furthermore, because transformer-based world models were designed primarily for discrete data, there was a significant gap in applying them to environments with continuous actions (e.g., robotic manipulators).

Our Solution: GIT-STORM

GIT-STORM addresses these issues with two main contributions:

- A Masked Generative Prior (MaskGIT Prior): The architecture builds on the existing STORM model but replaces its standard MLP prior with a MaskGIT prior. This approach masks parts of the future state and learns to predict the missing pieces from the surrounding context. This allows the model to better capture the global context, leading to more consistent and accurate predictions.

- A State Mixer for Continuous Control: To work with continuous action spaces, we use a state mixer function. This module combines the model’s categorical internal state with continuous action inputs. This is the first application of a categorical transformer-based world model to continuous control environments.
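To make the idea of "masking parts of the state and predicting the missing pieces from context" concrete, here is a minimal sketch of the iterative, confidence-based decoding schedule introduced in the original MaskGIT work. It is not GIT-STORM's exact procedure: the names `maskgit_decode`, `cosine_mask_ratio` and `toy_prior` are hypothetical, `toy_prior` stands in for the transformer prior, and greedy selection replaces MaskGIT's sampling so the output is deterministic.

```python
import math
from typing import Callable, List, Optional

# Hypothetical signature: given the partially revealed token sequence (None = masked),
# return a score for every vocabulary class at every position.
ScoreFn = Callable[[List[Optional[int]]], List[List[float]]]


def cosine_mask_ratio(step: int, total_steps: int) -> float:
    """Fraction of tokens that stay masked after `step` (MaskGIT's cosine schedule)."""
    return math.cos(math.pi / 2 * (step + 1) / total_steps)


def maskgit_decode(num_tokens: int, predict_scores: ScoreFn, total_steps: int = 4) -> List[int]:
    """Start fully masked; at each step reveal only the most confident predictions."""
    tokens: List[Optional[int]] = [None] * num_tokens
    for step in range(total_steps):
        scores = predict_scores(tokens)
        # Greedy best class for every still-masked position (MaskGIT itself samples).
        candidates = []
        for i, tok in enumerate(tokens):
            if tok is None:
                best = max(range(len(scores[i])), key=lambda k: scores[i][k])
                candidates.append((scores[i][best], i, best))
        # Reveal enough tokens that only the scheduled fraction remains masked.
        keep_masked = math.floor(num_tokens * cosine_mask_ratio(step, total_steps))
        num_to_reveal = max(1, len(candidates) - keep_masked)
        candidates.sort(reverse=True)  # most confident first
        for _, i, best in candidates[:num_to_reveal]:
            tokens[i] = best
    assert all(t is not None for t in tokens)  # the final step reveals everything
    return [t for t in tokens if t is not None]


def toy_prior(tokens: List[Optional[int]]) -> List[List[float]]:
    # Stand-in for the transformer prior: 3 classes, position i prefers class i % 3,
    # and later positions are (arbitrarily) more confident.
    scores = []
    for i in range(len(tokens)):
        row = [0.1, 0.1, 0.1]
        row[i % 3] = 0.5 + 0.05 * i
        scores.append(row)
    return scores


print(maskgit_decode(6, toy_prior))  # [0, 1, 2, 0, 1, 2]
```

In a real prior the scores at each step would depend on the tokens already revealed, which is what lets the model use global context rather than predicting every component independently.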
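One simple way to picture a state mixer is to flatten the categorical latent into a one-hot vector, concatenate it with the continuous action, and project the result into a single input token for the transformer. The sketch below assumes exactly that design (concatenation, one linear layer, SiLU); the class name `StateMixer`, the layer sizes and the random initialization are illustrative assumptions, not the architecture reported in the paper.

```python
import math
import random
from typing import List


def one_hot(indices: List[int], num_classes: int) -> List[float]:
    """Flatten a categorical latent (one class index per variable) into a one-hot vector."""
    vec = [0.0] * (len(indices) * num_classes)
    for var, cls in enumerate(indices):
        vec[var * num_classes + cls] = 1.0
    return vec


class StateMixer:
    """Hypothetical mixer: concatenate the one-hot state with a continuous action,
    then apply one linear layer followed by a SiLU nonlinearity."""

    def __init__(self, state_dim: int, action_dim: int, out_dim: int, seed: int = 0):
        rng = random.Random(seed)
        in_dim = state_dim + action_dim
        scale = 1.0 / math.sqrt(in_dim)
        self.weights = [[rng.uniform(-scale, scale) for _ in range(in_dim)] for _ in range(out_dim)]
        self.bias = [0.0] * out_dim

    def __call__(self, state: List[float], action: List[float]) -> List[float]:
        x = state + action  # concatenate discrete (one-hot) and continuous inputs
        out = []
        for row, b in zip(self.weights, self.bias):
            z = sum(w * xi for w, xi in zip(row, x)) + b
            out.append(z / (1.0 + math.exp(-z)))  # SiLU(z) = z * sigmoid(z)
        return out


state = one_hot([2, 0, 1], num_classes=4)  # 3 categorical variables, 4 classes each
action = [0.3, -0.7]                       # e.g. a 2-D continuous control command
mixer = StateMixer(state_dim=len(state), action_dim=len(action), out_dim=8)
token = mixer(state, action)
print(len(token))  # 8
```

The point of the design is that the continuous action never has to be discretized: it enters the same learned projection as the categorical state, so a transformer built for discrete latents can still condition on real-valued controls.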

Results

We evaluated GIT-STORM on two downstream tasks: reinforcement learning and video prediction.

- Atari 100k Benchmark: In the discrete action environments of the Atari 100k benchmark, GIT-STORM showed considerable performance gains. It outperforms strong baselines such as DreamerV3 and IRIS on the Interquartile Mean (IQM) metric. Notably, in the game Freeway, GIT-STORM achieved a positive score, whereas other methods required ad-hoc solutions to do so.

- DeepMind Control Suite (DMC): By successfully training on the DMC benchmark, we demonstrate the model’s effectiveness in this new domain. GIT-STORM consistently outperformed the baseline STORM model.

- Video Prediction: Across both benchmarks, the model’s imagined trajectories achieved a lower Fréchet Video Distance (FVD), indicating better video quality and more realistic predictions.
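The Interquartile Mean used for the Atari 100k comparison is the average of the middle 50% of scores, which makes it far less sensitive to a few outlier games than the plain mean. The sketch below shows the computation on hypothetical numbers (not results from the paper); in practice the inputs are human-normalized scores pooled over all games and runs, as in the rliable evaluation library. The helper names are illustrative.

```python
from statistics import mean
from typing import Sequence


def human_normalized(score: float, random_score: float, human_score: float) -> float:
    """Standard Atari normalization: 0 = random policy, 1 = human reference."""
    return (score - random_score) / (human_score - random_score)


def interquartile_mean(values: Sequence[float]) -> float:
    """Mean of the middle 50%: drop the lowest and highest 25% of values
    (same trimming rule as scipy.stats.trim_mean(values, 0.25))."""
    ordered = sorted(values)
    cut = int(0.25 * len(ordered))
    return mean(ordered[cut:len(ordered) - cut])


# Hypothetical normalized scores, not results from the paper.
scores = [1, 2, 3, 4, 5, 6, 50, 90]
print(mean(scores))                   # 20.125, dominated by two outliers
print(interquartile_mean(scores))     # 4.5
print(human_normalized(55, 10, 100))  # 0.5
```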
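For reference, FVD follows the same recipe as FID: features of real and generated videos are extracted with a pretrained video network (an I3D classifier in the original FVD formulation), a Gaussian is fitted to each feature set, and the Fréchet distance between the two Gaussians is reported. With means $\mu_r, \mu_g$ and covariances $\Sigma_r, \Sigma_g$ for the real and generated clips:

$$
\mathrm{FVD} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left(\Sigma_r + \Sigma_g - 2\left(\Sigma_r \Sigma_g\right)^{1/2}\right)
$$

A lower value means the feature statistics of the imagined trajectories sit closer to those of real videos.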

Why It Matters

The innovations in GIT-STORM lead to more accurate and versatile world models. By improving sample efficiency and predictive accuracy, this research marks an important step toward deploying reinforcement learning agents in complex, real-world applications.

We are pleased with this outcome and the recognition from the ICLR community. We believe this work contributes to building more capable and general AI systems.

Cristian Meo

Cofounder, CEO